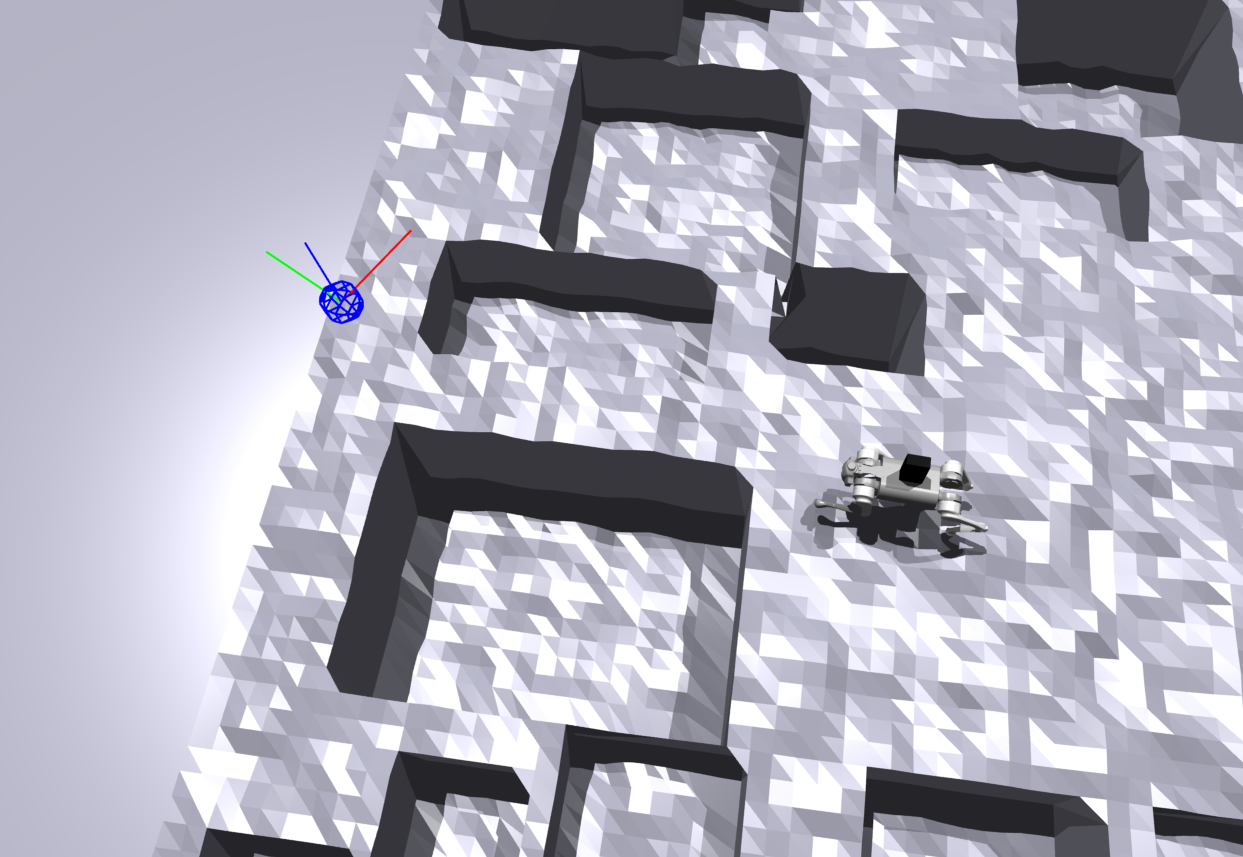

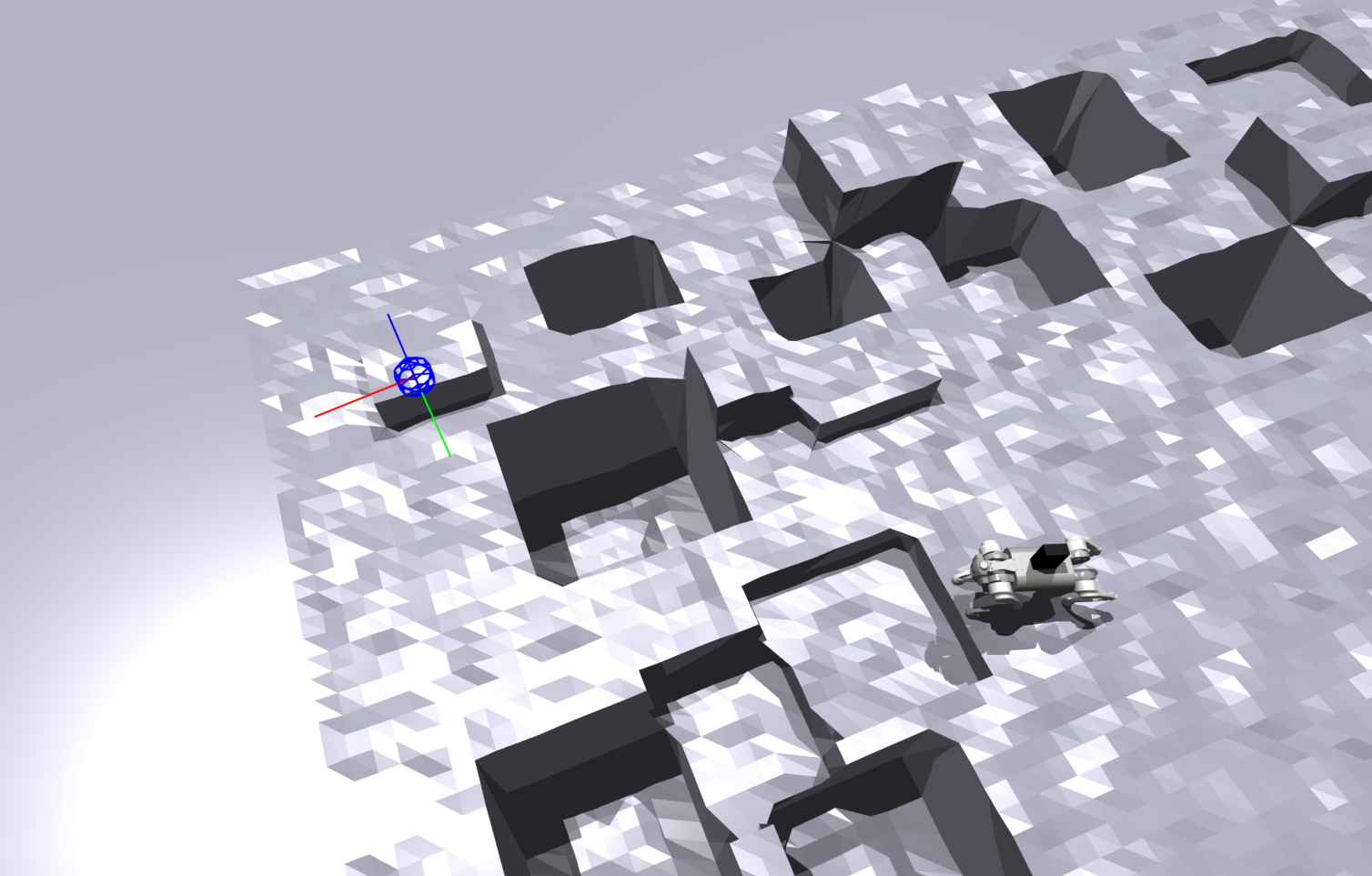

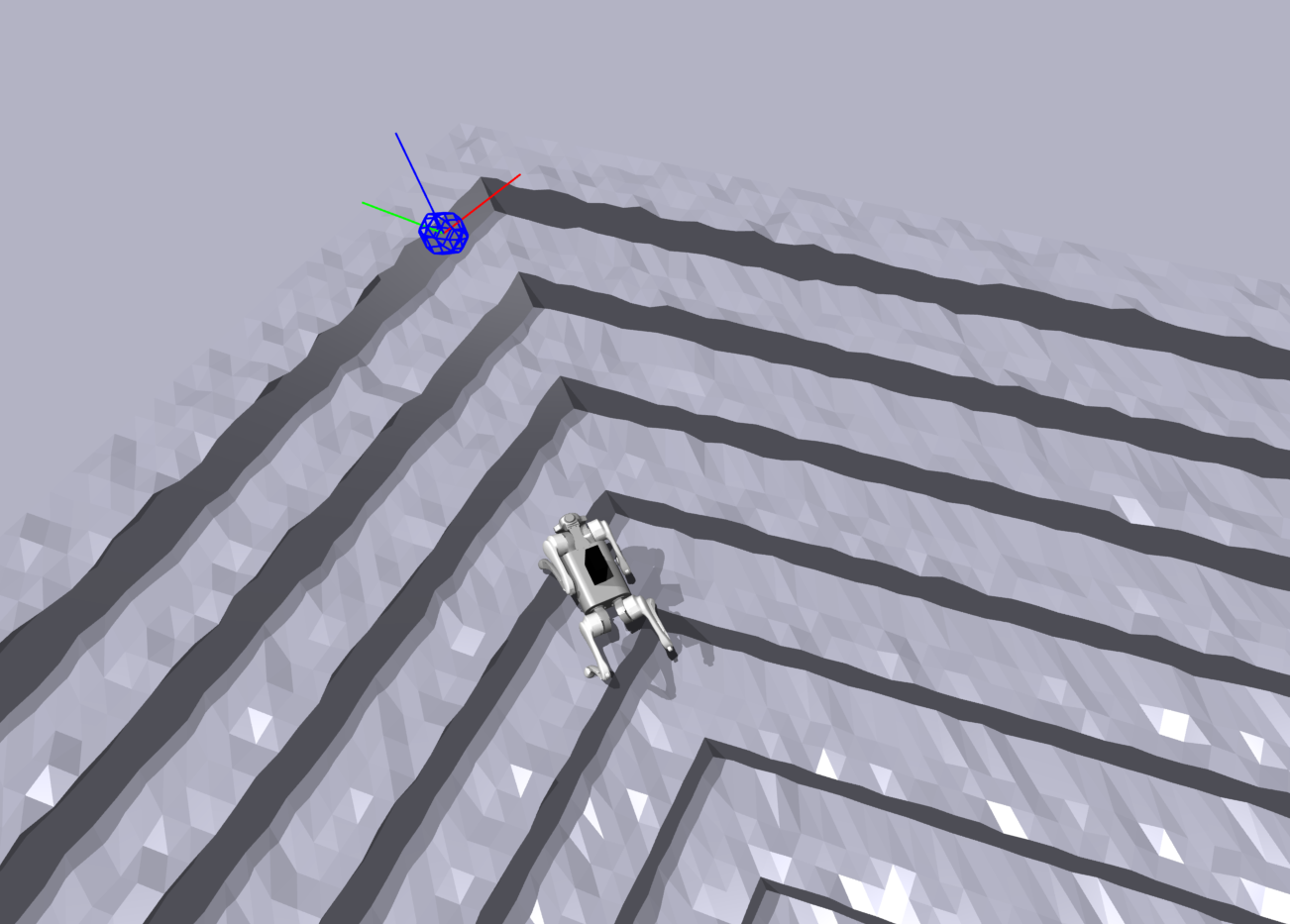

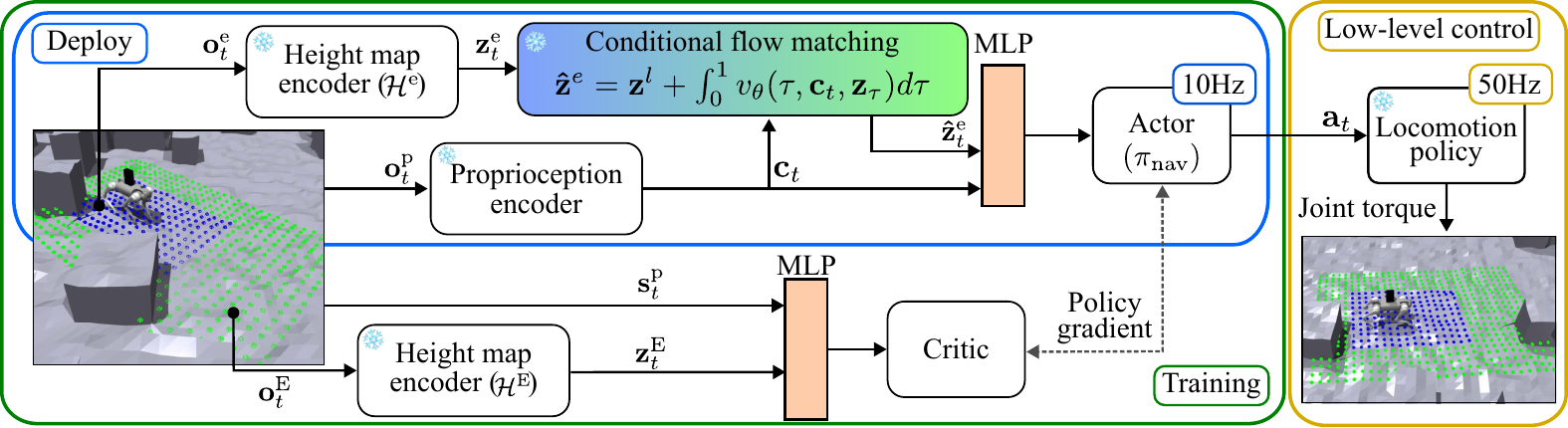

Method

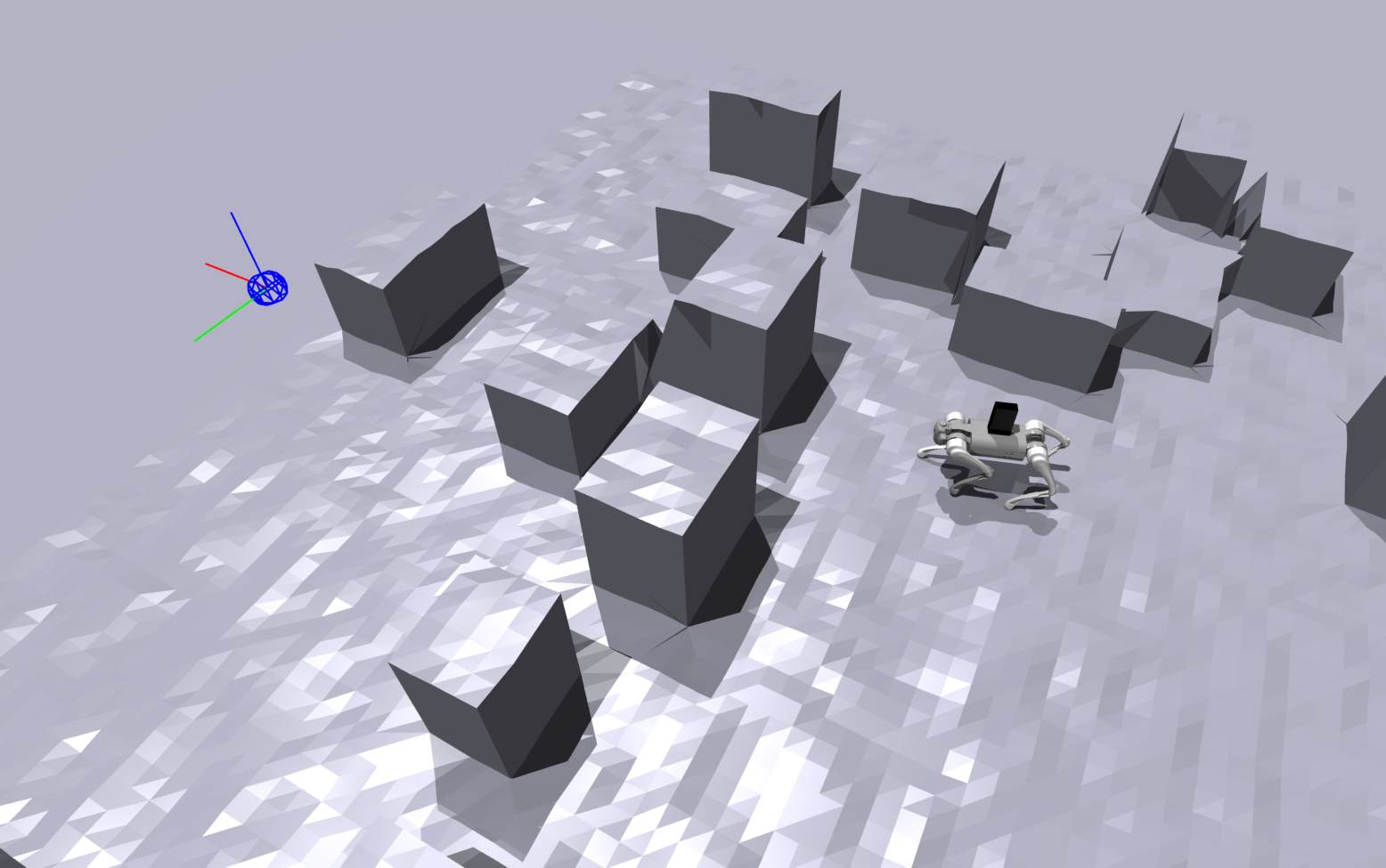

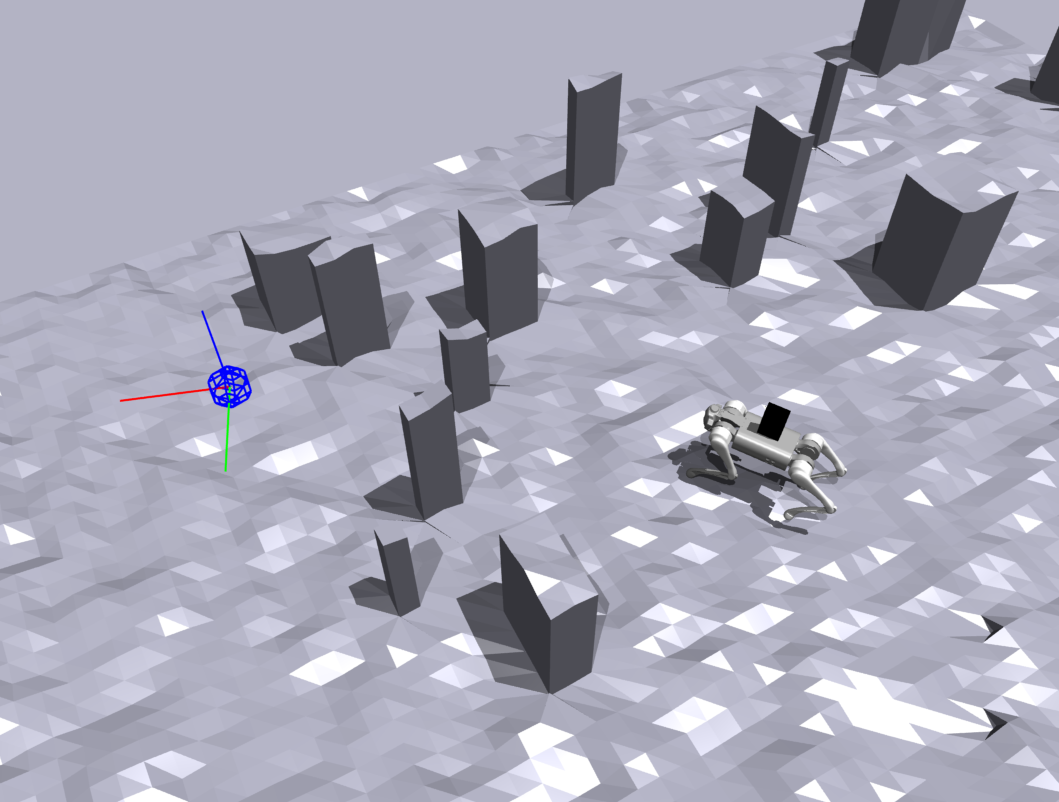

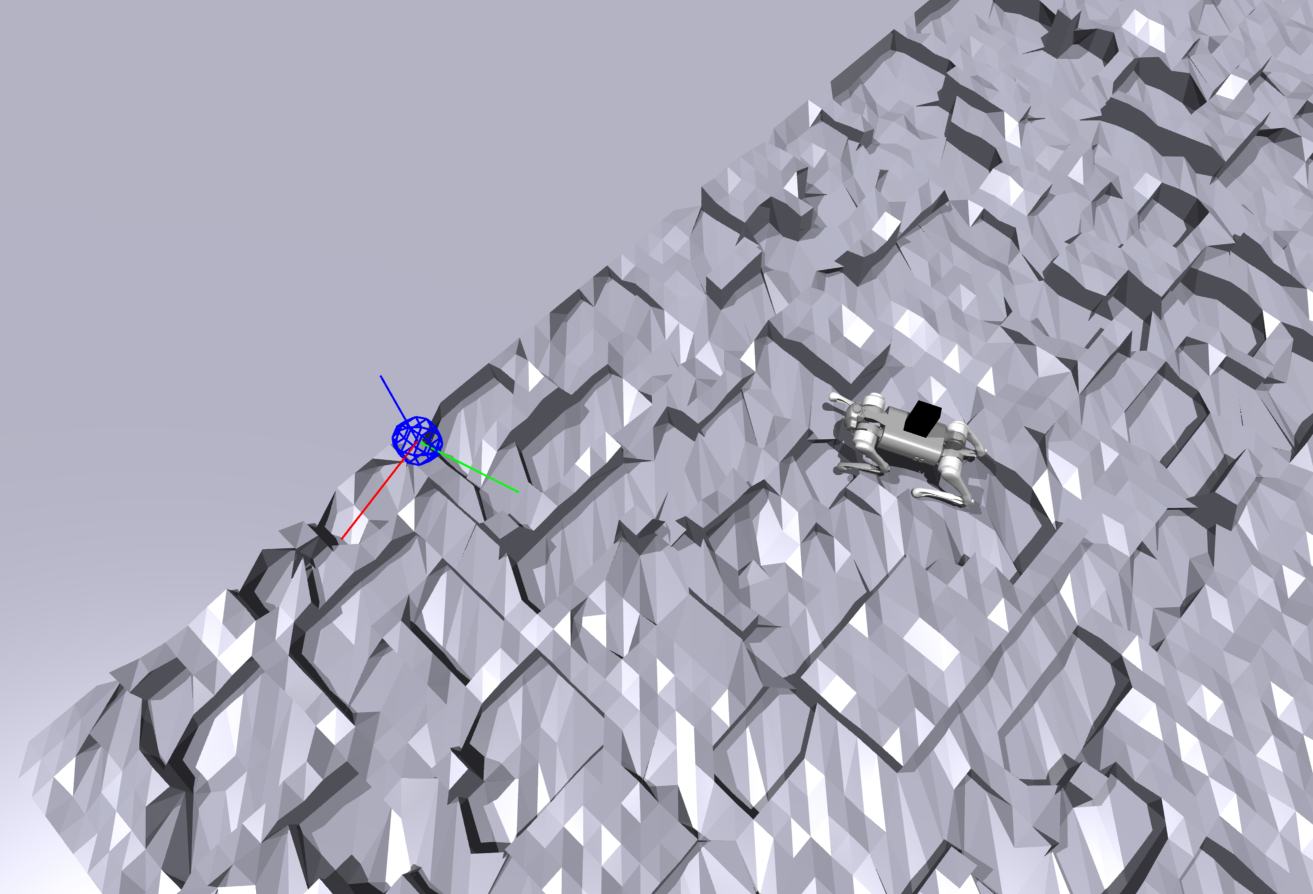

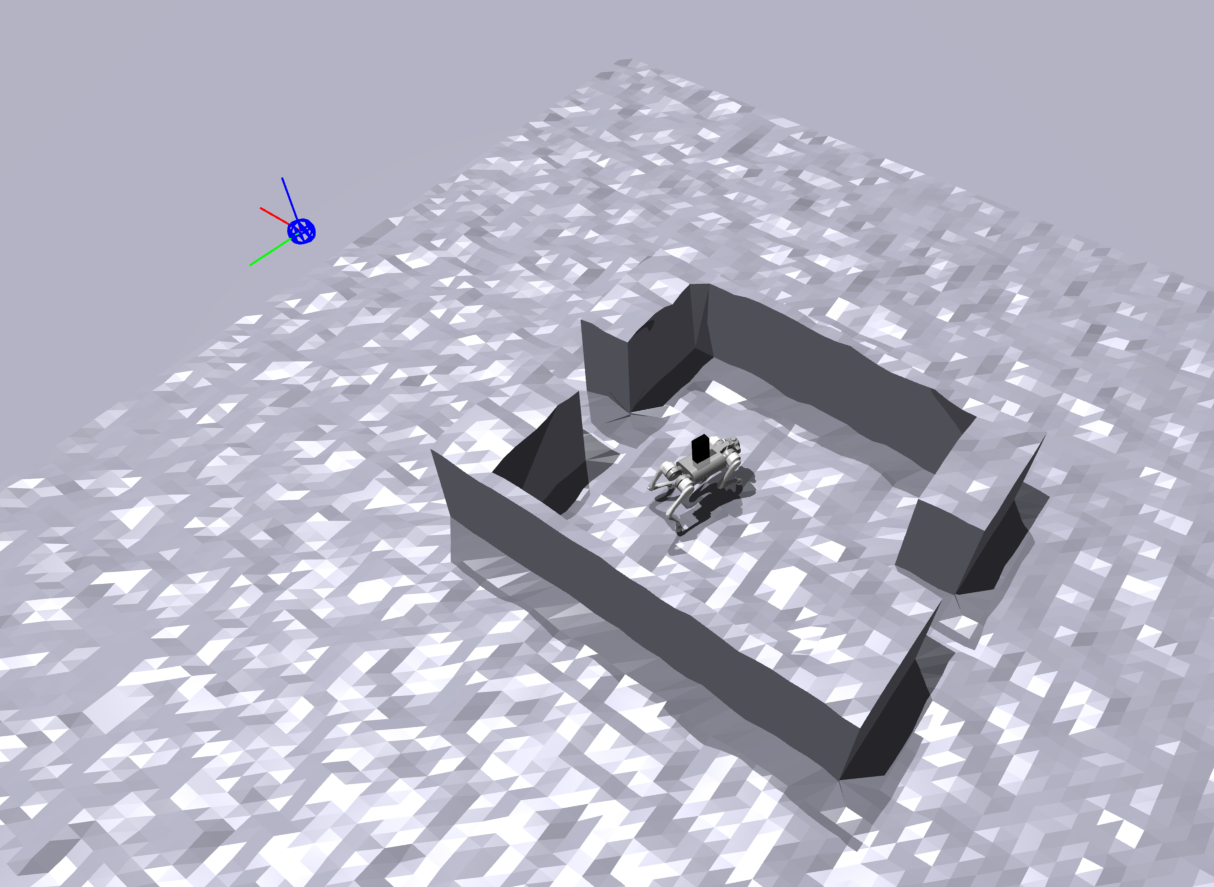

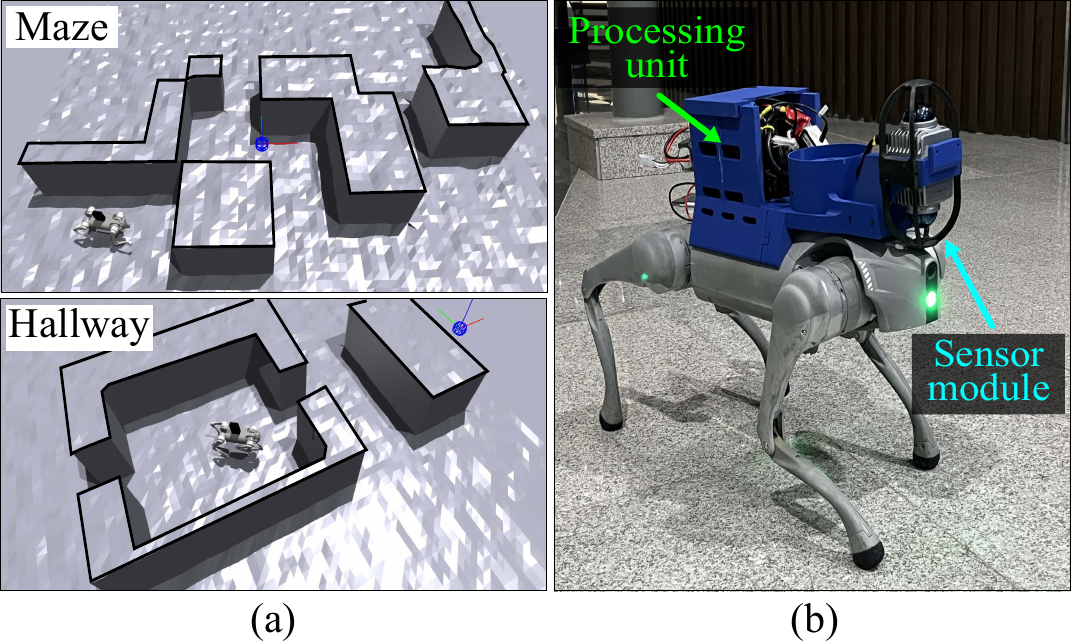

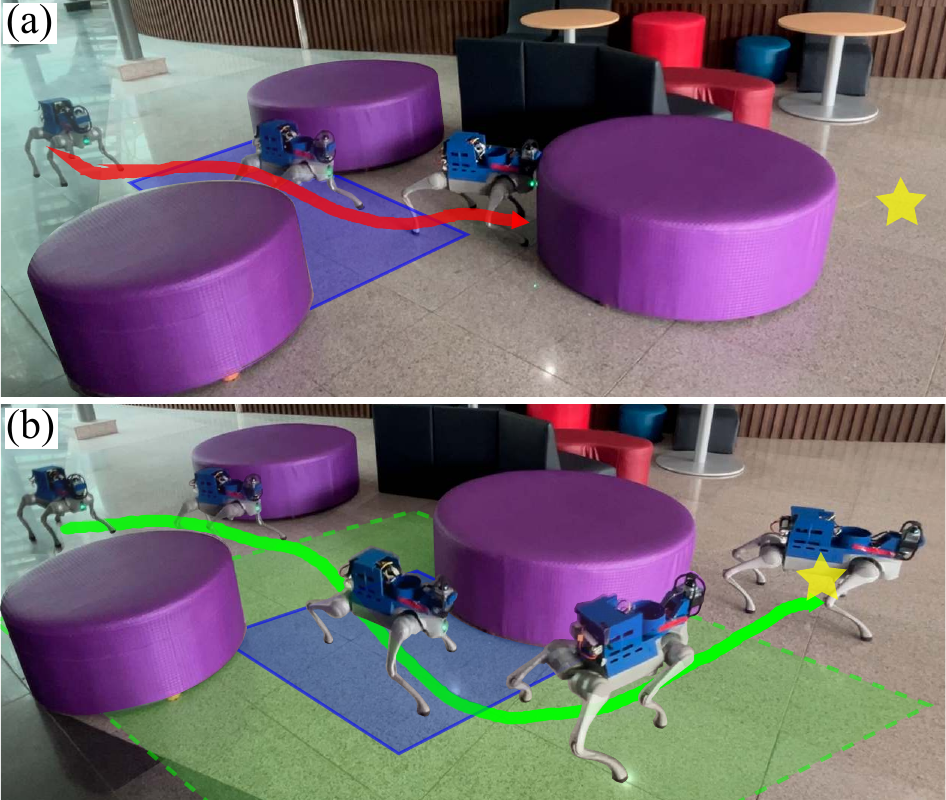

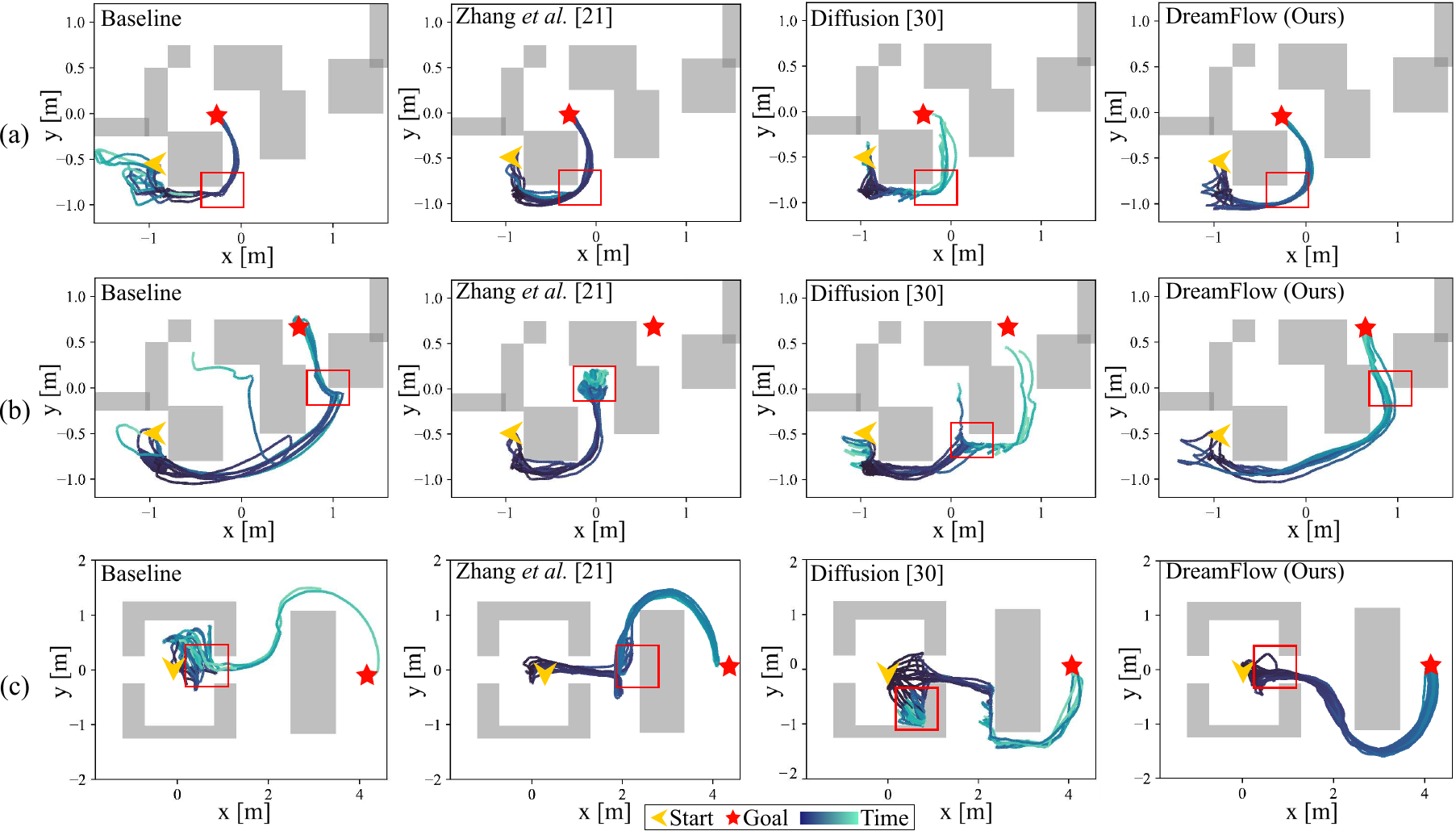

DreamFlow is designed as an asymmetric actor-critic framework. The actor encodes a local height map into a local environmental latent representation. The pre-trained CFM module then predicts an extended latent vector, which represents terrain beyond the sensor range, conditioned on the robot's proprioceptive context. The navigation policy takes both the local latent and the predicted extended latent as input and produces velocity actions, while a pre-trained locomotion policy serves as the low-level controller.
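The actor's data flow described above can be sketched in a few lines. This is only an illustration under stated assumptions, not the authors' implementation: the function names (`encode_local`, `predict_extended`, `navigation_policy`), the latent size, the fixed-step Euler integration, the three-component velocity command, and the toy stand-ins for the learned networks are all hypothetical.

```python
from typing import Callable, List

Vector = List[float]
# Hypothetical signature: velocity_field(z, t, context) -> dz/dt
VelocityField = Callable[[Vector, float, Vector], Vector]


def encode_local(height_map: List[List[float]], latent_dim: int = 4) -> Vector:
    """Toy stand-in for the local encoder: averages chunks of the flattened map."""
    flat = [h for row in height_map for h in row]
    chunk = max(1, len(flat) // latent_dim)
    return [sum(flat[i * chunk:(i + 1) * chunk]) / chunk for i in range(latent_dim)]


def predict_extended(z_local: Vector, context: Vector,
                     velocity_field: VelocityField, steps: int = 10) -> Vector:
    """Integrate dz/dt = v(z, t, context) from t=0 to t=1 with Euler steps.

    The flow starts at the local latent and ends at the predicted extended latent.
    """
    z = list(z_local)
    dt = 1.0 / steps
    for k in range(steps):
        v = velocity_field(z, k * dt, context)
        z = [zi + dt * vi for zi, vi in zip(z, v)]
    return z


def navigation_policy(z_local: Vector, z_extended: Vector) -> Vector:
    """Toy stand-in for the policy: maps both latents to a velocity command.

    The (forward, lateral, yaw) layout is an assumption made for this sketch.
    """
    features = z_local + z_extended
    mean = sum(features) / len(features)
    return [mean, 0.0, 0.0]


# Usage with placeholder inputs; a trained velocity field would replace the lambda.
height_map = [[0.1 * (r + c) for c in range(4)] for r in range(4)]
proprio_context = [0.5]
z_local = encode_local(height_map)
z_extended = predict_extended(z_local, proprio_context,
                              lambda z, t, c: [c[0]] * len(z))
velocity_command = navigation_policy(z_local, z_extended)
```

In this sketch the velocity command would then be passed to the low-level locomotion controller, which is outside the scope of the example.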

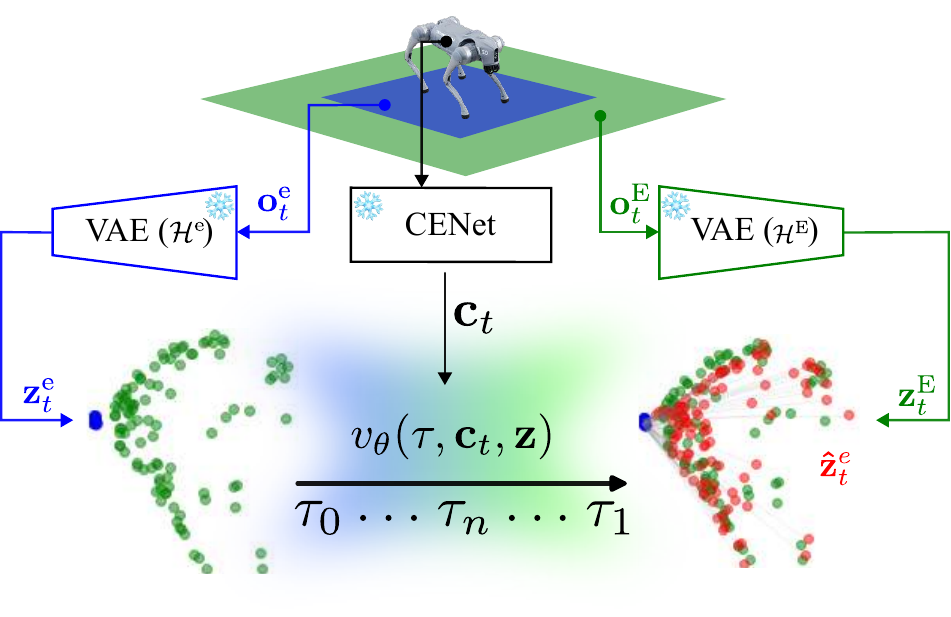

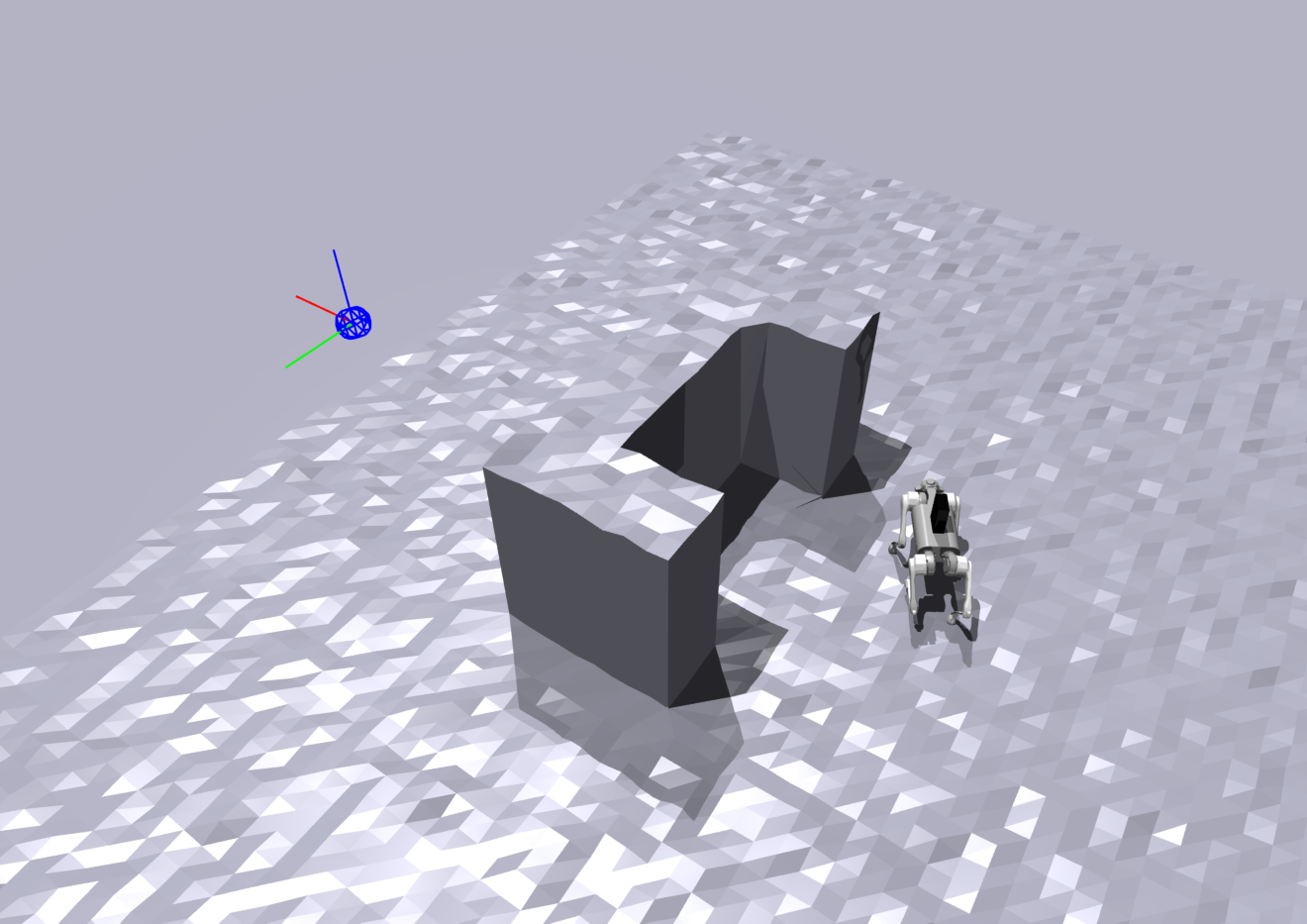

CFM Training Pipeline

The CFM training pipeline encodes local and extended height maps with pre-trained VAE encoders and collects the resulting latent pairs. The velocity field learns to transport the local latent towards the extended latent, conditioned on the proprioceptive context. This enables the model to "dream" about unseen terrain from partial observations.
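A minimal sketch of such a training objective follows, assuming that CFM denotes conditional flow matching and that the probability path is a straight line between the two latents; the text above does not specify the path, so this choice is an assumption. The helper names (`interpolate`, `target_velocity`, `cfm_loss`) and the toy velocity field are hypothetical and stand in for the learned network.

```python
import random
from typing import Callable, List, Sequence, Tuple

Vector = List[float]
# One training sample: (local latent, extended latent, proprioceptive context)
LatentPair = Tuple[Vector, Vector, Vector]
VelocityField = Callable[[Vector, float, Vector], Vector]


def interpolate(z_local: Vector, z_ext: Vector, t: float) -> Vector:
    """Point on the straight path from z_local (t=0) to z_ext (t=1)."""
    return [(1.0 - t) * a + t * b for a, b in zip(z_local, z_ext)]


def target_velocity(z_local: Vector, z_ext: Vector) -> Vector:
    """Velocity of the straight path; it is constant in t."""
    return [b - a for a, b in zip(z_local, z_ext)]


def cfm_loss(velocity_field: VelocityField, batch: Sequence[LatentPair],
             rng: random.Random) -> float:
    """Squared error between predicted and target velocity, averaged over the batch."""
    total = 0.0
    for z_local, z_ext, context in batch:
        t = rng.random()                      # sample a time in [0, 1)
        z_t = interpolate(z_local, z_ext, t)  # intermediate latent on the path
        u = target_velocity(z_local, z_ext)   # regression target
        v = velocity_field(z_t, t, context)   # model prediction
        total += sum((vi - ui) ** 2 for vi, ui in zip(v, u))
    return total / len(batch)


# Usage with a single placeholder latent pair and an untrained (zero) field.
batch = [([0.0, 0.0], [1.0, 2.0], [0.3])]
untrained_loss = cfm_loss(lambda z, t, c: [0.0] * len(z), batch, random.Random(0))
```

In practice the velocity field would be a neural network and this loss would be minimized with a gradient-based optimizer over many latent pairs; the sketch only shows how one batch contributes to the objective.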